MCP Is Where AI Risk Becomes Real

Ask most security teams where AI risk resides, and you’ll hear familiar answers: prompt injections, hallucinations, model misuse. That framing was born in the first wave of AI deployments, when LLMs were mostly generating text. Way back then (sometime last year), AI outputs carried real risk - but there was still a layer of human intervention between output and execution.

That layer is now disappearing - and with it, the confidence that deployments are secure. In a recent survey, only 36% of security and technology leaders said their security capabilities keep pace with AI, while roughly 90% of companies think they cannot defend against AI-driven threats.

The reason? To start, today’s AI security architecture was built for a time when governance revolved around content - what a model produced, not what it could initiate.

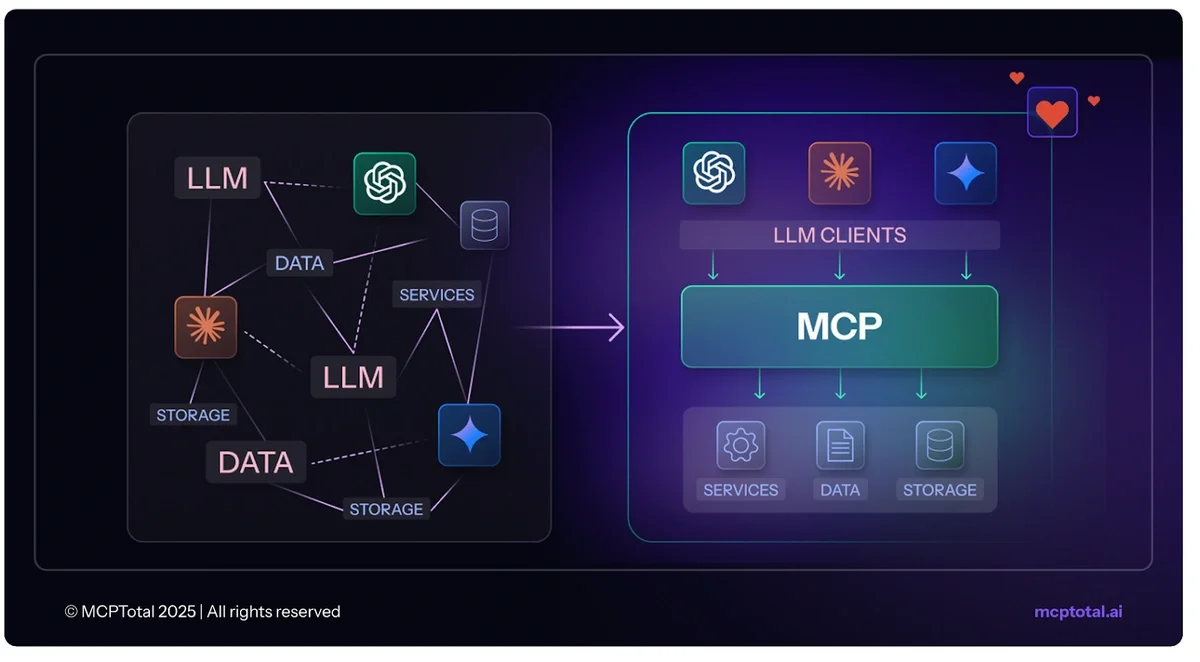

Today, AI has crossed a line. Agents now trigger workflows, call APIs, and interact with live data using production credentials. The LLM is now the starting point for system-level actions, most of which flow through the Model Context Protocol (MCP). MCP changes the nature of AI risk. In this blog, we’ll take a look at why that is and what security leaders can do about it.

From Insight to Execution (and Risk), Powered by MCP

Today’s AI risk begins where LLM output initiates system activity - from accessing files and updating records to calling APIs and launching workflows across tools. Each step inherits trust from the previous one, and a single output can touch multiple systems downstream.

The flexibility of these workflows comes from how quickly they evolve and how easily they run across disconnected tools - like shared folders, unmanaged APIs, and third-party plugins. Yet that same adaptability introduces risk, since even minor changes in logic or metadata can trigger unintended effects across systems that were never designed to coordinate. According to recent research, 1 in every 35 generative AI prompts carries a high risk of sensitive data leakage - a pattern that affects 87% of organizations deploying GenAI at scale.

And all this complexity is facilitated by MCP. It handles tool selection, data transfer, and system interaction behind the scenes. It determines how model outputs reach execution paths and what those paths include. MCP is the de facto logic layer between generation and action - which also makes it a potential source of problems and the vehicle by which they spread.

Why This Layer Escapes Visibility

If MCP sits at the center of AI execution, why doesn’t it show up in today’s security workflows? The answer lies in how AI agent environments are built and deployed.

MCP servers often run on developer laptops, containers, or browser clients - outside formal provisioning, with live credentials and no centralized control. Developers commonly use tools like Cursor, OpenAI SDKs, or browser-based agents to quickly connect models to internal systems.

The problem is that traditional security tools don’t see this layer. There’s no inventory of MCP servers, no logging of tool usage, and no inspection of traffic between agents and business systems. Activity moves across fragmented environments that lack a single point of control. This carries a price tag. In most organizations, sensitive data is broadly accessible to AI tools - some 99% of organizations have exposed files that can be retrieved, processed, or misused by GenAI systems, all without formal safeguards in place.

This makes MCP a form of Shadow AI - productive, fast-moving, yet almost entirely untracked. And the problem isn’t just technical – it’s organizational, too.
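To make the missing inventory concrete, here is a minimal sketch of what a first pass could look like. It assumes MCP clients declare their servers in a JSON config file under a top-level `mcpServers` key, which holds for some clients but should be verified per tool. The function name, the record fields, and the secret-name heuristic are all hypothetical, and the config paths are supplied by the caller rather than discovered.

```python
import json
from pathlib import Path

# Substrings hinting that an env var holds a secret (a heuristic, not a standard).
SECRET_HINTS = ("KEY", "TOKEN", "SECRET", "PASSWORD")


def inventory_mcp_servers(config_paths):
    """Return one record per MCP server declared in the given JSON config files.

    Assumes each file keeps its servers under a top-level "mcpServers" object.
    """
    records = []
    for path in map(Path, config_paths):
        try:
            config = json.loads(path.read_text(encoding="utf-8"))
        except (OSError, json.JSONDecodeError):
            continue  # missing or unreadable file: nothing to inventory here
        servers = config.get("mcpServers") if isinstance(config, dict) else None
        if not isinstance(servers, dict):
            continue
        for name, spec in servers.items():
            if not isinstance(spec, dict):
                continue
            env = spec.get("env")
            env = env if isinstance(env, dict) else {}
            records.append({
                "config": str(path),
                "server": name,
                # Local servers usually declare a command; remote ones a URL.
                "endpoint": spec.get("command") or spec.get("url"),
                # Report only the variable names, never the values.
                "secret_env_vars": sorted(
                    key for key in env
                    if any(hint in key.upper() for hint in SECRET_HINTS)
                ),
            })
    return records
```

Run against the config files found on a developer machine, a list like this would show which servers exist and which ones are started with live credentials. It says nothing yet about what those servers actually do at runtime.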

Schedule a meeting with us to scan your endpoints and get a report showing all MCP clients and servers, along with their associated security risks. It's pretty cool to see, and it's all agentless.

Why This Risk Persists Inside the Organization

Most organizations have not yet defined where MCP belongs. It doesn’t fall neatly under model governance, which focuses on prompts and responses. It doesn’t sit fully within infrastructure, since many deployments never become formalized or centrally managed. And it doesn’t register in application security, because there is often no application to review.

This lack of ownership mirrors what breach data already shows: according to IBM, 63% of organizations either have no formal AI governance policy in place or are still developing one. That absence reflects the broader gap discussed above - most systems today were built to evaluate inputs and secure boundaries, not to govern what happens after outputs are triggered. MCP reshapes those boundaries, but there are few mechanisms in place to reflect that change. Until someone is responsible for the execution layer itself, the risk it generates will keep slipping through the cracks.

MCP as the New Control Point for AI Risk

The lack of ownership, visibility, and governance around agent activity has created a critical gap - but also a clear opportunity.

MCP brings structure to agent workflows by defining how tasks are executed, which tools are involved, and how data moves between systems.

That structure makes MCP a natural control point.

MCP activity follows a defined path. It passes through known servers, runs on identifiable machines, and touches well-monitored systems. These are observable surfaces, even if they haven’t been treated that way yet.

Security teams that anchor visibility in this layer can reduce guesswork. They can trace how agent actions unfold, apply guardrails where it counts, and begin establishing governance over how those actions are carried out.
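As an illustration of a guardrail at this layer, the sketch below checks a single MCP message before it is forwarded. MCP tool invocations are JSON-RPC requests with the method `tools/call` and the tool name in `params.name`. Everything else here is an assumption made for the example: the `ToolPolicy` shape, the `check_tool_call` function, the server and tool names, and the in-memory audit list. It is not a description of how any particular product enforces policy.

```python
import json
from dataclasses import dataclass, field


@dataclass
class ToolPolicy:
    # Hypothetical policy shape: server name -> set of tool names agents may call.
    allowed: dict = field(default_factory=dict)


def check_tool_call(policy, server, raw_message, audit_log):
    """Decide whether one JSON-RPC message may pass to the named server.

    Tool calls are checked against the allowlist and recorded in audit_log;
    every other message type passes through untouched in this sketch.
    """
    message = json.loads(raw_message)
    if message.get("method") != "tools/call":
        return True
    tool = message.get("params", {}).get("name")
    decision = tool in policy.allowed.get(server, set())
    # Record every tool call, allowed or not, so actions can be traced later.
    audit_log.append({"server": server, "tool": tool, "allowed": decision})
    return decision


# Usage: allow a read on a hypothetical "crm" server, block a delete.
policy = ToolPolicy(allowed={"crm": {"read_contact"}})
audit_log = []
request = json.dumps({
    "jsonrpc": "2.0", "id": 1, "method": "tools/call",
    "params": {"name": "delete_contact", "arguments": {"id": 42}},
})
blocked = not check_tool_call(policy, "crm", request, audit_log)
```

Even a check this small yields both things the paragraph above describes: a trace of what agents attempted, and one place to decide what they are allowed to do.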

This is exactly why we created MCPTotal. Our platform consolidates agent activity into a single managed environment, enforces policy at the point of execution, and gives security teams real-time visibility into how MCP is used across desktop, browser, and cloud environments. What was once invisible becomes auditable, controllable, and safe to scale.

The Bottom Line

AI risk becomes real the moment outputs lead to action. That execution path runs through MCP - the layer that connects agents to the systems, services, and data they rely on. It’s already part of how teams build and deploy AI-driven automation, but it often lacks review or control.

MCP decides how model output reaches production systems and shapes what agents do once they’re live. That makes it the right place to introduce structure and governance.

MCPTotal makes that possible. It brings oversight to the execution layer and turns untracked agent activity into something secure, visible, and manageable. When you start with system-level visibility, security can keep pace with adoption – and keep close track of where the real risk lives.

MCP is where AI risk becomes real. MCPTotal makes that risk visible and controllable. Get started now.